A Court Cannot Stop the Future: Why Kenya’s Anti-AI Pleadings Ruling Is Jurisprudentially Backward and Must Be Set Aside

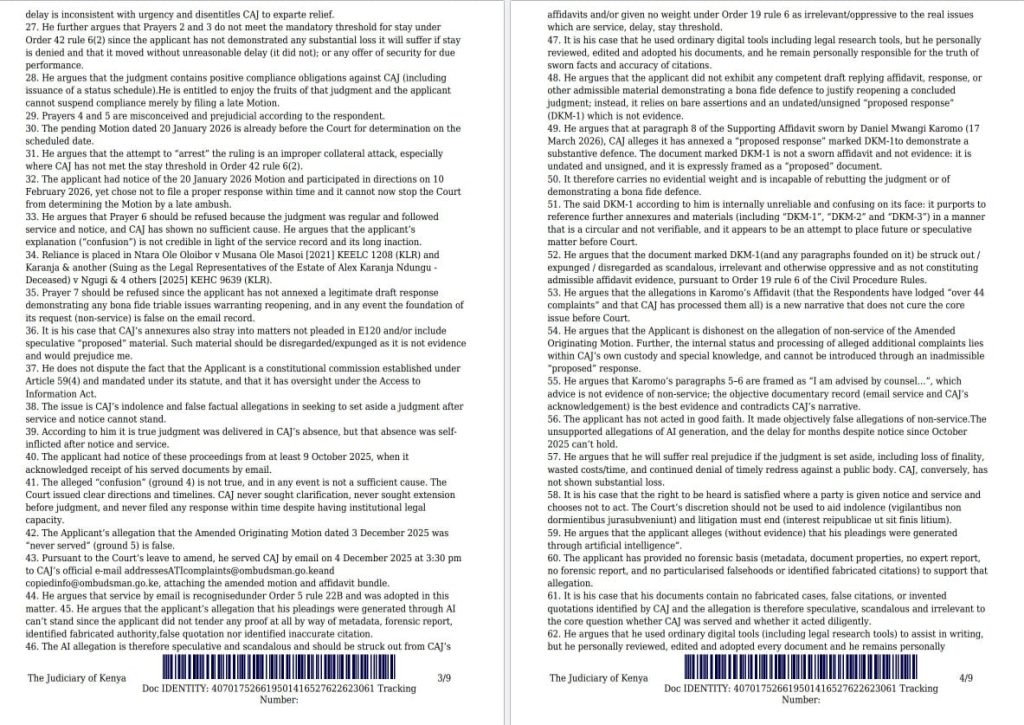

Kenya’s High Court has handed down a ruling that reads less like modern jurisprudence and more like a judicial panic attack in the face of technological change. From the screenshots provided, the court treated the undisclosed use of artificial intelligence in drafting pleadings not merely as a procedural concern, but as an abuse of process so grave that it tainted the entire decision-making chain. It then went further, effectively declaring that pleadings generated with AI cannot stand in Kenya unless and until a formal legislative or regulatory framework is created.

That approach is not bold. It is not visionary. It is not protective of justice. It is timid, overly formalistic, and deeply out of step with the direction in which courts, regulators, lawyers, and justice systems around the world are moving. The issue before the court should never have been whether AI exists, whether people are already using it, or whether the law can pretend it away. The real issue should have been this: what duties of candour, verification, accountability, disclosure, and evidentiary reliability should attach when AI is used in legal work? That was the jurisprudential opening. The court had it in its hands and squandered it.

A serious court, confronted with a first-generation AI problem, should build a first-generation AI rule. It should not try to drag the administration of justice back into the pre-digital age. The world has already moved. AI is now embedded in search, drafting, review, translation, summarisation, transcription, document management, disclosure workflows, contract analysis, legal research, and compliance systems. The ship has sailed. The only responsible legal question is how to govern that reality without undermining fairness or truth.

The ruling confuses misuse of AI with the existence of AI

The screenshots show a court deeply concerned that it could not verify the tool used, the prompts given, or the path by which the pleaded words were produced. That concern is understandable. But understandable concern is not enough to justify bad doctrine. Courts do not ban pens because a pleading contains lies. They do not ban search engines because a lawyer misquotes a case. They do not prohibit all templates because a party abused a form. Law regulates conduct, not fear.

The proper jurisprudential distinction is simple: AI misuse should attract consequences; AI use should attract duties. That is the distinction serious jurisdictions are drawing. Where a filing contains fake authorities, fabricated quotations, non-existent precedents, altered evidence, or undisclosed reliance that misleads the court, sanctions are justified. But where AI is used as a drafting aid, a language assistant, a structural editor, a research accelerator, or a document summariser, the answer is supervision, verification, disclosure where necessary, and responsibility resting squarely on the litigant or advocate who signs and files the document.

The ruling, as reflected in the screenshots, collapses that distinction. It appears to say that because the court cannot fully inspect the machine pathway, the pleading itself becomes suspect. That logic is too broad. By that reasoning, courts would have to invalidate huge ranges of technology-assisted work whose internal operations are not fully transparent to a judge at first glance. Modern litigation already relies on software ecosystems, databases, search engines, drafting tools, electronic discovery platforms, transcription tools, and automated formatting systems. The law has never demanded omniscience about the technology stack. It demands responsibility from the human being using it.

Why the “uneven playing field” argument is intellectually weak

One of the most striking parts of the ruling is the suggestion that AI-assisted pleadings create an unfair advantage in an adversarial system and therefore undermine equality before the law. That is a profoundly weak basis on which to build doctrine. The justice system has never equated fairness with forced technological uniformity. Some litigants can afford senior counsel; others cannot. Some firms use expensive research databases; others rely on open sources. Some parties deploy litigation-support teams, forensic accountants, e-discovery software, and expert knowledge systems; others appear in person. The law responds to those inequalities through procedure, disclosure, legal aid, judicial case management, evidentiary rules, and professional duties. It does not criminalise efficiency.

In fact, there is a powerful counterargument the ruling failed to engage with: properly governed AI can expand access to justice. It can help self-represented litigants structure arguments, summarise records, translate legal language into plain language, identify missing dates and annexures, and reduce drafting costs that have historically locked ordinary people out of the system. The Federal Court of Australia now expressly recognises that generative AI can facilitate the just resolution of disputes by increasing efficiency, reducing legal costs, enhancing access to justice, and improving the administration of justice.[1] That is the mature debate. The Kenyan ruling, by contrast, treats technological assistance as presumptively suspect rather than conditionally acceptable.

The danger in the court’s reasoning is obvious. Once a court frames efficiency itself as an unfair advantage, it turns innovation into a vice. That is not how modern justice systems survive. That is how they become brittle, expensive, exclusionary, and obsolete.

The judge had a chance to build Kenyan AI jurisprudence and missed it

This is what makes the ruling so frustrating. The court was standing at the frontier of a real legal problem. It could have produced a principled framework that lower courts, advocates, litigants, regulators, and the Rules Committee could build on. Instead, it reached for a blunt instrument. It chose prohibitionist language where guidance was needed. It chose anxiety over architecture.

A better ruling would have said at least six things. First, that any person filing a pleading remains fully responsible for every factual assertion, citation, authority, and submission regardless of whether AI was used. Second, that undisclosed AI use may justify case-specific sanctions where the non-disclosure materially misleads the court or prejudices another party. Third, that fabricated authorities, invented quotations, fake annexures, or unverifiable claims generated through AI amount to professional or procedural misconduct and may attract striking out, costs, referrals, or contempt-type consequences depending on the context. Fourth, that the use of AI for clerical, structural, drafting, summarisation, and research support is not inherently improper if the final filing is independently reviewed and verified. Fifth, that affidavits, witness statements, and evidence carry stricter safeguards because authenticity and personal knowledge matter in a different way. Sixth, that the judiciary and the Rules Committee should urgently issue disclosure and verification protocols.

That would have been jurisprudence. That would have been leadership. That would have acknowledged reality while preserving judicial integrity. Instead, the court seems to have implied that the absence of a Kenyan framework means AI should effectively stay out of pleadings altogether. But courts do not wait for Parliament before developing procedural discipline around new risks. They interpret duty, candour, fairness, and abuse of process every day. That is their job.

What other jurisdictions are actually doing

The global trend is not to outlaw AI in litigation. The trend is to regulate it, disclose it in appropriate settings, and sanction falsehoods. Canada’s Federal Court issued guidance in 2023 and then an updated notice in 2024 requiring parties, counsel, and interveners who use AI in preparing materials to make a declaration, while making clear that the point is transparency and the preservation of existing legal responsibilities, not a blanket ban.[2] Canada’s Federal Court also separately states that the Court itself will not use AI to make judgments and orders without public consultations, again reflecting a governance approach rather than technological denial.[3]

Singapore’s courts published a 2024 guide for court users on generative AI tools. The guide does not pretend the technology can be wished away. It explains the risks, reminds users of their duties, and expects users to assess whether such tools are suitable, especially given accuracy and confidentiality concerns.[4] New Zealand’s courts and tribunals have adopted guidelines for both lawyers and non-lawyers using generative AI chatbots in proceedings, explicitly acknowledging that use is increasing and that guidance is necessary because the judiciary remains responsible for the integrity of court processes.[5]

In England and Wales, the judiciary issued guidance in December 2023 for judicial office holders on responsible AI use in courts and tribunals.[6] In Australia, the Federal Court’s GenAI Practice Note takes a sophisticated approach: it recognises benefits, insists on accuracy, confidentiality, and compliance with professional obligations, and builds detailed constraints around use rather than pretending no use should occur.[1] Victoria’s Supreme Court likewise allows AI use subject to ordinary litigation duties, and specifically indicates that where AI use may affect the provenance or weight of a document, the use should be disclosed to the court and other parties.[7]

This is what serious legal systems do. They regulate the frontier. They do not slam the gate and call that wisdom.

Even the sanctions cases abroad do not support a total anti-AI rule

The international cases that have made headlines are not cases where courts condemned AI as such. They are cases where lawyers filed fake citations, invented authorities, or made misleading submissions after failing to verify machine output. Mata v Avianca in the Southern District of New York became famous because counsel submitted non-existent authorities and then compounded the problem, leading to sanctions.[8] That case is not authority for the proposition that AI may not be used in litigation. It is authority for a much narrower and more sensible proposition: lawyers must verify what they file and cannot hide behind a tool.

Reuters has documented the same pattern repeatedly in 2024, 2025 and 2026: courts in the United States and Australia are increasingly warning, sanctioning, or fining lawyers for unverified AI hallucinations, while still accepting that AI can be useful when used responsibly.[9] That matters. The direction of travel in comparative jurisprudence is accountability, not prohibition. Where judges are strongest, they police accuracy. Where they are weakest, they confuse technological discomfort with legal principle.

Why the ruling is vulnerable on appeal

This ruling is vulnerable because it appears to overreach beyond the actual harm demonstrated. If the problem before the court was non-disclosure, questionable drafting provenance, or concern about unverifiable machine content, the remedy should have been tailored to those defects. A court must explain why lesser interventions were insufficient. Why not order disclosure? Why not require a verifying affidavit? Why not strike only offending passages? Why not test whether any cited authority was false? Why not invite submissions on a protocol? Why not impose costs linked to the misconduct proven?

Instead, from the screenshots, the court appears to have treated AI-assisted drafting itself as contaminating the pleading and therefore the judgment. That is a drastic leap. Appellate courts are often wary of categorical procedural innovations that lack clear statutory footing, especially where they undermine access to justice, impose sweeping new burdens on litigants, or turn a case-specific problem into a general ban. If the ruling effectively invents a new disability in pleading practice without a clear rule, rule amendment, practice direction, or legislation, it raises obvious proportionality and legality questions.

It is also vulnerable because it misunderstands the constitutional stakes. Equality before the law does not require equal technological backwardness. Access to justice is not protected by forcing litigants to draft the hard way. A constitutional order should be wary of doctrines that make legal participation more expensive, more technical, and less accessible to ordinary people. If used responsibly, AI can lower those barriers. A court that ignores that dimension risks defending form while injuring substance.

The right Kenyan response is regulation, not retreat

Kenya does not need judicial fear. Kenya needs a practical AI-in-courts protocol. The Judiciary, the Rules Committee, the Law Society of Kenya, and relevant regulators should move quickly to issue interim guidance. That guidance should distinguish between prohibited uses, restricted uses, and permissible uses. It should require human verification of all authorities and factual propositions. It should require disclosure where AI materially assisted in drafting submissions, where provenance matters, or where evidentiary authenticity may be affected. It should absolutely forbid using AI to invent citations, embellish witness evidence, manufacture annexures, or obscure the true source of a statement. It should preserve confidentiality duties. It should empower judges to issue case-specific directions. And it should make clear that the signer owns the document.

That is the path of intelligent adaptation. It is the path other jurisdictions are already taking. It is the path Kenya should have begun building from this very case. Instead, the ruling points the wrong way. It tells a country living in the age of machine assistance that its courts would prefer to act as if the calendar still reads 1900. That is not prudence. That is institutional hesitation dressed up as doctrine.

Conclusion: this decision should be set aside

This decision deserves to be challenged, criticised, and ultimately set aside. Not because courts should surrender to AI. Not because every use of AI is safe. And certainly not because verification no longer matters. It should be set aside because it chooses the wrong legal target. The danger is not AI by itself. The danger is falsehood, non-disclosure that misleads, weak verification, compromised evidence, and careless filing. Those are human failures, even when a machine is involved.

Kenya’s courts should be writing the rules for trustworthy AI use in justice, not staging a rear-guard war against technological reality. A jurisprudence that punishes the existence of a tool instead of disciplining its abuse will age badly, and quickly. The better future is obvious: regulate the use, preserve the duty, punish the fraud, and keep the doors of justice open to the technologies that can make justice cheaper, faster, clearer, and more accessible.

History is usually unkind to institutions that mistake change for chaos. The wiser response is to shape change before change shapes you. Kenya’s High Court had that chance here. It should not be the appellate courts that remind it of that basic truth. But if that is what it takes, then that is what should happen.


About Steve Biko Wafula

Steve Biko is the CEO of Soko Directory and the founder of Hidalgo Group of Companies. Steve is currently developing his career in law, finance, entrepreneurship and digital consultancy, and has been implementing consultancy assignments for client organizations, comprising training and capacity building in entrepreneurial matters. He can be reached on +254 20 510 1124 or by email at info@sokodirectory.com
